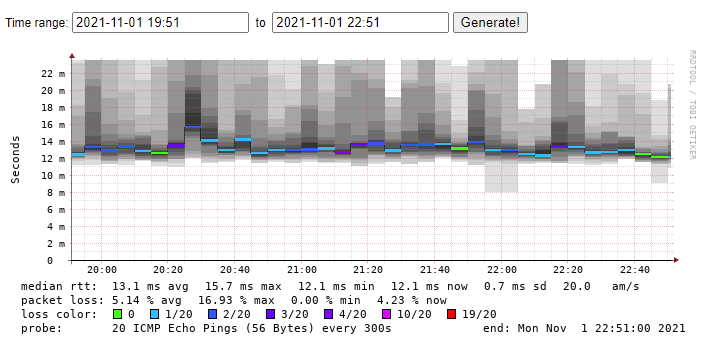

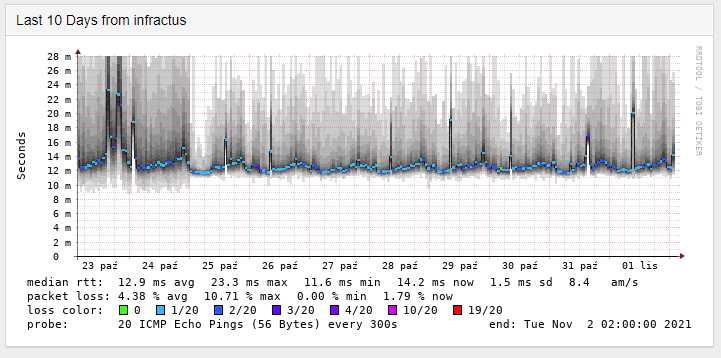

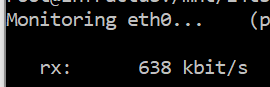

Ok, so INEA.pl is on my list of the worst providers ever. They give you a cable modem and a cable connection with something like 10-20% packet loss (when you are lucky. FOR REAL.). I occasionally back up my computer (200 Mbps upload) to my computer on the INEA network (30 Mbps advertised download). Unfortunately, the speeds I reach are around 800 kbps. So I started to wonder: can I make it better?

I figured that a couple of changes might increase the speed:

- increase the receive buffer size (and the corresponding send window): with a bigger window, the connection can tolerate more packet loss. I don't quite know the Linux internals (in particular how SACK would optimize the buffer), but assuming 2 retransmissions and an RTT of 200 ms, I need a buffer that holds 600 ms worth of data; rounding that up to 1 second, I went with a ~2 MB buffer.

- play with congestion control (on the sender). BBR does not use packet loss as its main congestion signal, so in theory it should cope with a lossy link better, and I want to give it a try.
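The buffer estimate from the first point can be sanity-checked with a little shell arithmetic. This is only a rough sketch: `buffer_bytes` is a hypothetical helper, and it assumes the advertised 30 Mbps is the rate the buffer has to cover.

```shell
# buffer_bytes MBPS MS: bytes needed to hold MS milliseconds of traffic
# at MBPS megabits per second (hypothetical helper for this estimate).
buffer_bytes() {
    mbps=$1
    ms=$2
    # Mbit/s * ms = kilobits; * 1000 = bits; / 8 = bytes.
    echo $(( mbps * ms * 1000 / 8 ))
}

buffer_bytes 30 600    # 3 x 200 ms RTT at the advertised rate
buffer_bytes 30 1000   # the same, rounded up to one second
```

Under those assumptions 600 ms comes to about 2.25 MB and a full second to about 3.75 MB, so the 2 MB value used in the commands below sits close to the 600 ms figure.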

Receiving Side

# Maximum input queue size (number of packets).

# I did not give much thought to this value :)

sysctl -w net.core.netdev_max_backlog=30000

# 2 MB receiving buffer

sysctl -w net.core.rmem_max=2097152

Sending Side

modprobe -v -a tcp_bbr # by default not loaded in Debian

sysctl -w net.ipv4.tcp_congestion_control=bbr

sysctl -w net.core.default_qdisc=fq

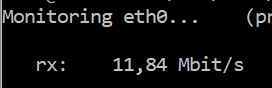

sysctl -w net.core.wmem_max=2097152

This was enough to bring the download speed up from ~80-100 KB/s to 1.2 MB/s.

This is a dramatic difference. Note that just changing the buffer sizes is not enough: CUBIC cannot deal with such high packet loss. It treats packet loss as a sign of congestion and backs off, but on this link the loss is there whether or not the link is congested.
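To check that the switch to BBR actually took effect on the sender, something like the following can help. It is only a sketch: `has_cc` is a hypothetical helper name, and it assumes the usual files under `/proc/sys/net/ipv4/` are readable.

```shell
# has_cc NAME LIST: succeed if NAME appears in the space-separated LIST.
# (hypothetical helper, not part of any tool)
has_cc() {
    case " $2 " in
        *" $1 "*) return 0 ;;
        *) return 1 ;;
    esac
}

# Algorithms the kernel currently offers (bbr is listed only once tcp_bbr is loaded).
available=$(cat /proc/sys/net/ipv4/tcp_available_congestion_control 2>/dev/null || true)
# Algorithm used by default for new connections.
active=$(cat /proc/sys/net/ipv4/tcp_congestion_control 2>/dev/null || true)

if has_cc bbr "$available"; then
    echo "bbr is available, default is: $active"
else
    echo "bbr is not available, default is: $active"
fi
```

While a transfer is running, `ss -ti` on the sender also prints the algorithm name and retransmission counters for each TCP connection.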

This is in line with the observations from this paper:

"While we find that BBR achieves high goodput in shallow buffers, we observe that BBR's packet loss can be several orders of magnitude higher than that of Cubic when the buffers are shallow."

https://www3.cs.stonybrook.edu/~arunab/papers/imc19_bbr.pdf

One thing that worries me a bit is that the congestion control setting I changed is the default for the entire machine. This computer is my NAS server, and I still need to test how switching from CUBIC to BBR affects the speed of my NAS on a 10GbE network.
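One possible way around this, which I have not tested here, is to leave the global default alone and request BBR only for the route to the remote machine, using the `congctl` option of `ip route`. The sketch below only prints the command so it can be inspected before being run as root. The address, gateway and interface are made-up placeholders, `bbr_route_cmd` is a hypothetical helper, and `tcp_bbr` still has to be loaded.

```shell
# Placeholder values (documentation address ranges), not my real network.
REMOTE=203.0.113.10   # the machine on the INEA network
GATEWAY=192.0.2.1     # default gateway of the sender
DEV=eth0              # outgoing interface of the sender

# bbr_route_cmd REMOTE GATEWAY DEV: print an `ip route` command that sets
# BBR for a single destination only (hypothetical helper).
bbr_route_cmd() {
    echo "ip route replace $1/32 via $2 dev $3 congctl bbr"
}

bbr_route_cmd "$REMOTE" "$GATEWAY" "$DEV"
```

With a route like this in place, `net.ipv4.tcp_congestion_control` could stay at `cubic` for the 10GbE traffic while connections to that one host use BBR.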
